The Hidden Gap in Digital Vision

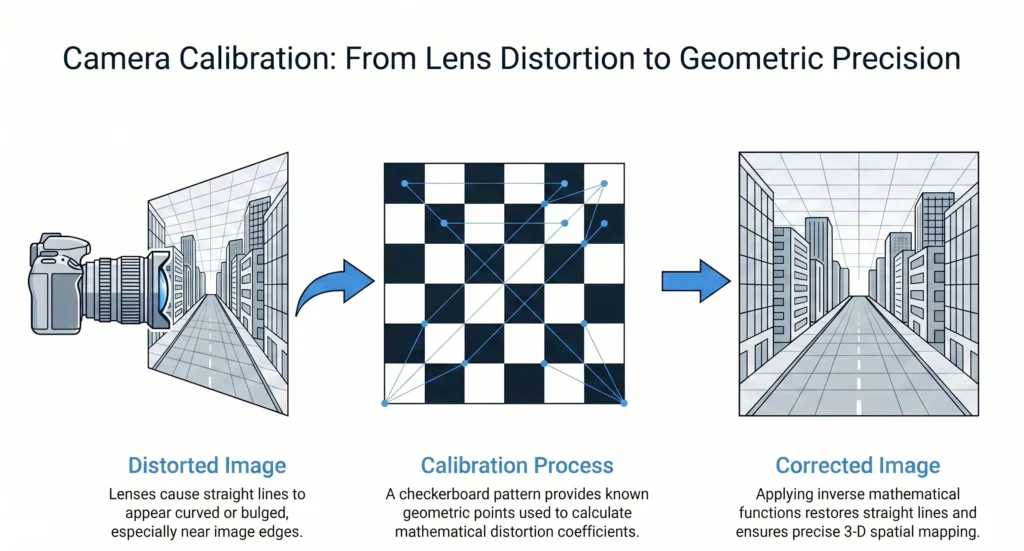

When we look at a digital image, we usually assume it reflects reality. However, every camera introduces a small but important gap between what exists and what is captured. This happens because no real lens bends light perfectly. As light passes through the glass on its way to the sensor, rays are refracted slightly differently depending on where they enter the lens. As a result, straight lines can appear curved, and measured distances can be off by a few pixels. For humans, these distortions are rarely noticeable. But for machines, they can cause serious problems. A robot may misjudge distance. A system may misinterpret shape. Even a few pixels of error can affect accuracy.

This is where camera calibration becomes essential. It works as a correction process that reduces this gap and aligns the digital image with the real world.

Understanding Camera Calibration: The Eye vs the Lens

To understand camera calibration, it helps to compare a camera with the human eye. The human brain constantly adjusts for perspective, depth, and distortion. It corrects visual errors without conscious effort. Because of this, we rarely notice imperfections in what we see. A camera does not have this ability. It records whatever reaches the sensor, distortions included. Camera calibration gives the system a model of its own vision. It defines how the camera behaves and how it relates to the physical world.

This is done using two types of parameters:

- Intrinsic parameters, which describe the internal properties of the camera, such as focal length and the principal point (where the optical axis meets the sensor)

- Extrinsic parameters, which describe the position and orientation of the camera in space

Together, these parameters help create a consistent mapping between the real world and the captured image.
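
One common way to write this mapping is the pinhole camera model. The notation below is an assumption for illustration and does not appear in the text above: $K$ holds the intrinsic parameters (focal lengths $f_x, f_y$ and principal point $c_x, c_y$, with zero skew assumed), $R$ and $t$ are the extrinsic rotation and translation, $(X, Y, Z)$ is a world point, $(u, v)$ is its pixel position, and $s$ is a scale factor equal to the point's depth in the camera frame.

$$
s \begin{bmatrix} u \\ v \\ 1 \end{bmatrix}
= K \, [\, R \mid t \,]
\begin{bmatrix} X \\ Y \\ Z \\ 1 \end{bmatrix},
\qquad
K = \begin{bmatrix} f_x & 0 & c_x \\ 0 & f_y & c_y \\ 0 & 0 & 1 \end{bmatrix}
$$

This form ignores lens distortion, which is usually modeled as a separate step.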

How Camera Calibration Connects Two Worlds

Camera calibration links a three-dimensional world to a two-dimensional image. The real world has depth, width, and height. A camera sensor, however, records only height and width. This creates a loss of depth information. Calibration does not recover the lost depth on its own, but it pins down the geometric relationship between points in the scene and their positions in the image.

First, the camera forms an image using perspective projection. Objects that are farther away appear smaller, while closer objects appear larger. This is a natural effect, but it must be measured correctly.
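
As a minimal sketch of perspective projection, the plain-Python example below projects the two ends of a one-metre bar at two depths. The function names and the intrinsic values (800 px focal length, principal point at 320, 240) are hypothetical, chosen only for illustration, and the model assumes an ideal lens with no distortion.

```python
def project_camera_point(x, y, z, fx, fy, cx, cy):
    """Project a point in camera coordinates (z along the optical axis) to pixels."""
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    # Perspective divide: the image offset shrinks in proportion to depth z.
    return fx * x / z + cx, fy * y / z + cy


def projected_width(width_m, depth_m, fx, fy, cx, cy):
    """Pixel width of a horizontal bar centred on the optical axis at a given depth."""
    left, _ = project_camera_point(-width_m / 2, 0.0, depth_m, fx, fy, cx, cy)
    right, _ = project_camera_point(width_m / 2, 0.0, depth_m, fx, fy, cx, cy)
    return right - left


# Hypothetical intrinsics, not taken from any real camera.
FX = FY = 800.0
CX, CY = 320.0, 240.0

near_px = projected_width(1.0, 2.0, FX, FY, CX, CY)  # bar 2 m away
far_px = projected_width(1.0, 4.0, FX, FY, CX, CY)   # same bar 4 m away
print(near_px, far_px)  # prints: 400.0 200.0
```

Doubling the depth halves the projected width, which is the "farther objects appear smaller" effect expressed as arithmetic.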

Second, the system corrects distortions introduced by the lens. These distortions are not always uniform. In many cases, they become stronger near the edges of the image.

To correct this, a mathematical distortion model maps each distorted pixel position back to where it would fall under an ideal lens. The goal is to make the digital image represent the real scene accurately.
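
One widely used family of models is polynomial radial distortion. The sketch below assumes a two-coefficient radial model on normalized image coordinates (pixel offset from the principal point divided by focal length). The coefficient value and function names are hypothetical, and real lenses may also need tangential terms that are left out here.

```python
def distort(x, y, k1, k2=0.0):
    """Apply a radial distortion model to normalized image coordinates."""
    r2 = x * x + y * y
    factor = 1.0 + k1 * r2 + k2 * r2 * r2
    return x * factor, y * factor


def undistort(xd, yd, k1, k2=0.0, iterations=20):
    """Invert distort() by fixed-point iteration (adequate for mild distortion)."""
    x, y = xd, yd  # start from the distorted position
    for _ in range(iterations):
        r2 = x * x + y * y
        factor = 1.0 + k1 * r2 + k2 * r2 * r2
        x, y = xd / factor, yd / factor
    return x, y


K1 = -0.2  # hypothetical barrel-distortion coefficient

# Displacement caused by the lens near the centre and near the edge.
centre_shift = abs(distort(0.1, 0.0, K1)[0] - 0.1)
edge_shift = abs(distort(0.5, 0.0, K1)[0] - 0.5)

# Round trip: distort a point, then recover its ideal position.
recovered = undistort(*distort(0.5, 0.3, K1), K1)
```

With these values the shift near the edge is roughly a hundred times larger than near the centre, matching the observation that distortion grows toward the image borders. The iterative inverse is a design choice: the polynomial has no simple closed-form inverse, so a few fixed-point steps are a common workaround.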

In real-time systems, this process must be both fast and precise. Slow or inaccurate correction can lead to incorrect decisions.

Why Accuracy is Non-Negotiable

In many applications, small errors can have large consequences. In robotics, incorrect calibration can lead to navigation errors. A robot may misjudge distance or collide with objects. In autonomous vehicles, accurate measurement is critical. Even minor distortion can affect how the system detects obstacles. In AR and VR, digital objects must align with the real world. If calibration is off, objects appear to drift or float. This breaks the illusion and reduces usability. These examples show that camera calibration is not optional. It is necessary wherever machines rely on visual data.

From Checkerboards to Flexible Models

Camera calibration has changed significantly over time. Earlier methods relied on controlled setups. A common approach uses a checkerboard pattern placed in front of the camera. By capturing this known pattern from different angles, the system calculates the camera's parameters. This method works well and is still widely used, but it requires time and controlled conditions. Modern approaches are more flexible. Researchers now use models that do not assume perfect symmetry in the lens. Instead, they allow for variations across different parts of the image. Some methods even use real-world scenes for calibration. Straight lines in buildings or roads can act as references. This removes the need for special patterns.
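
The idea of using straight lines as references can be sketched with synthetic data. The example below is an illustration under stated assumptions, not a production method: it assumes a single radial coefficient, simulates a bent line with a made-up "true" value, and then picks the candidate coefficient that makes the undistorted points straightest. All names and numbers are hypothetical.

```python
import math


def distort(x, y, k1):
    """One-coefficient radial distortion on normalized coordinates."""
    factor = 1.0 + k1 * (x * x + y * y)
    return x * factor, y * factor


def undistort(xd, yd, k1, iterations=25):
    """Invert distort() by fixed-point iteration."""
    x, y = xd, yd
    for _ in range(iterations):
        factor = 1.0 + k1 * (x * x + y * y)
        x, y = xd / factor, yd / factor
    return x, y


def straightness_error(points):
    """Largest distance of any point from the chord joining the first and last points."""
    (x0, y0), (x1, y1) = points[0], points[-1]
    length = math.hypot(x1 - x0, y1 - y0)
    return max(
        abs((x1 - x0) * (y0 - y) - (x0 - x) * (y1 - y0)) / length
        for x, y in points
    )


def estimate_k1(observed, candidates):
    """Grid search: choose the coefficient that straightens the observed line best."""
    return min(
        candidates,
        key=lambda k1: straightness_error([undistort(x, y, k1) for x, y in observed]),
    )


# Synthetic scene: a straight edge away from the image centre, bent by the lens.
TRUE_K1 = -0.15  # made-up ground truth for the simulation
straight_line = [(i / 10, 0.4) for i in range(-6, 7)]
observed = [distort(x, y, TRUE_K1) for x, y in straight_line]

candidates = [i / 100 for i in range(-30, 31)]
estimated_k1 = estimate_k1(observed, candidates)
```

A real system would detect line segments in images and use a proper optimizer over many lines rather than a grid search over one, but the principle is the same: the correct parameters are the ones that make known-straight structures straight again.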

The Shift Toward Intelligent Calibration

Recent developments in deep learning have introduced new possibilities. Some systems estimate camera parameters directly from images. They analyze visual patterns and predict how the camera behaves. Other approaches go further. Instead of calculating parameters, they learn to correct the image directly, transforming a distorted image into a corrected one at the pixel level. This is sometimes called blind calibration. It allows systems to fix images even when the camera details are unknown. These approaches make calibration more practical and adaptable in real-world situations.

Conclusion: Bridging Vision and Reality

Camera calibration is a fundamental part of how machines understand the visual world. It reduces distortion, restores accuracy, and connects digital images to physical reality. Without it, systems cannot measure or interpret their environment correctly. As technology continues to advance, camera calibration is becoming more automated and intelligent. However, its purpose remains the same: to ensure that what a machine sees is as close to reality as possible.